
Some time ago, the term Selective Integration appeared. This was the concept of aligning the signals before averaging them, even throwing out really bad samples that couldn't be properly aligned, and only then combining the signals. We used this back in 1980, as mentioned, with 16 samples, thus getting a potential 4X advantage in SNR. This concept, or derivatives of it, is used in all sorts of signal processing today, from wireless communications to advanced satellite and astronomy image processing (kinda looking down, looking up).

Way back, an amateur astronomer in Boston used Selective Integration techniques of alignment and addition to show images of the International Space Station taken at 12 noon, yes, midday, from the top of the Boston Science Museum!! He used a Meade 12" Schmidt-Cassegrain telescope and took images with a simple security camera recording on a video recorder. He then selected and aligned individual video images (Selective) and co-added them (Integration). The result was amazing!! He then took some images of other "special" satellites, which got him a visit from some "special" folks.

So there was no "illusion" of detail, as you say, taking place with Selective Integration techniques. The detail was always there, hidden by some forms of "noise", and the techniques just allowed it to be revealed.
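The align, reject, co-add procedure described here can be illustrated with a small 1-D toy. This is only a sketch under stated assumptions, not the code used in 1980 or by the Boston astronomer: the function names, the synthetic Gaussian "target", the circular shifts, the cross-correlation score and the 0.5 rejection ratio are all hypothetical choices made for the example.

```python
import math
import random


def make_frames(n_good=16, n_bad=3, length=64, noise=0.1, max_jitter=4, seed=0):
    """Synthetic data (assumption): a Gaussian bump, randomly shifted, plus noise.

    The first frame has zero shift so it can serve as the alignment reference.
    The 'bad' frames contain noise only, standing in for unusable samples.
    """
    rng = random.Random(seed)
    truth = [math.exp(-((i - length // 2) ** 2) / (2 * 2.0 ** 2)) for i in range(length)]
    frames = []
    for k in range(n_good):
        j = 0 if k == 0 else rng.randint(-max_jitter, max_jitter)
        frames.append([truth[(i - j) % length] + rng.gauss(0, noise) for i in range(length)])
    for _ in range(n_bad):
        frames.append([rng.gauss(0, noise) for _ in range(length)])
    return truth, frames


def best_shift(ref, frame, max_shift):
    """Return (shift, score) maximising the circular cross-correlation with ref."""
    n = len(ref)
    best_s, best_score = 0, -math.inf
    for s in range(-max_shift, max_shift + 1):
        score = sum(ref[i] * frame[(i + s) % n] for i in range(n))
        if score > best_score:
            best_s, best_score = s, score
    return best_s, best_score


def selective_integration(frames, max_shift=5, min_score_ratio=0.5):
    """Align every frame to frames[0], drop poor matches, average the rest."""
    ref = frames[0]
    n = len(ref)
    aligned, scores = [], []
    for frame in frames:
        s, score = best_shift(ref, frame, max_shift)
        # Undo the estimated shift (wraps around the edges; fine for this toy).
        aligned.append([frame[(i + s) % n] for i in range(n)])
        scores.append(score)
    # "Selective": keep only frames that correlate well with the reference.
    cutoff = min_score_ratio * max(scores)
    kept = [a for a, sc in zip(aligned, scores) if sc >= cutoff]
    # "Integration": co-add (here, average) the surviving aligned frames.
    stacked = [sum(col) / len(kept) for col in zip(*kept)]
    return stacked, len(kept)


def rms_error(a, b):
    """Root-mean-square difference between two equal-length sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


truth, frames = make_frames()
stacked, n_kept = selective_integration(frames)
print("frames kept:", n_kept, "of", len(frames))
print("single-frame RMS error:", rms_error(frames[0], truth))
print("stacked RMS error:", rms_error(stacked, truth))
```

Real stacking software works on 2-D images and uses far more careful registration and quality metrics; the point here is only the order of operations: align first, reject what cannot be aligned, then combine.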

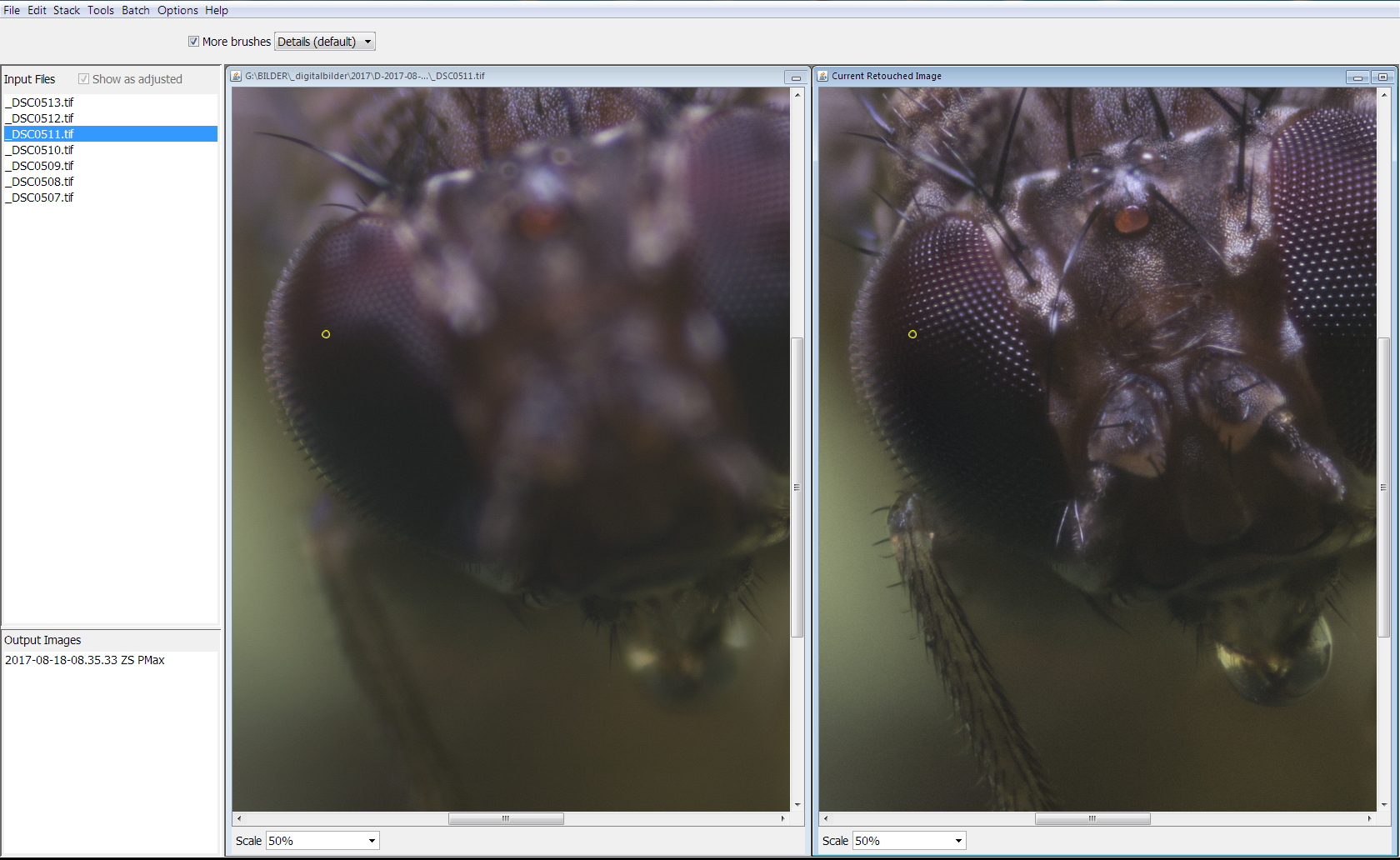

Why would you need to focus stack a shot that was taken at infinity?!

To improve edge sharpness (if shot through a refractor at high magnifications, where edges may be soft), reduce atmospheric effects by averaging, and even improve an image that suffers from random (uncorrelated to the shutter period) mount vibrations. We've actually used the latter (not with Zerene though, but with custom image stacking code) back in ~1980 to stack interferometer images that were slightly affected by base vibration (battlefield instrument). I have little understanding of exactly how the Zerene algorithms operate, or which one was used by the OP. Does Zerene do any averaging, or does it merely select the sharpest images?

Zerene has two algorithms used for stacking, DMap and PMap, depth-map and pyramid based respectively. Rik Littlefield is the author and probably a good source for this question, certainly better than myself. My simplistic understanding is that some level of "averaging" takes place within the algorithms, but only within the in-focus (IF) areas, not around the out-of-focus (OOF) areas. This is why some of the stacked images show less CA than the original images used for the stack; at least that's my limited understanding of what goes on behind the scenes with Zerene.

All of that will give you the illusion of detail, because stacking won't actually compensate for those defects. IMHO you'd have a more accurate image if you physically compensated for, or tried to eliminate, those variables instead of making the shot artificially detailed in post.

One of the prime functions of any modern stacking software package is to account for image movement during the alignment phase. That movement can be caused by many factors: base movement, lens movement, here maybe atmospheric effects (apparent image movement), subject movement and so on. Sometimes you can't do much to eliminate these effects; I agree you should if you can. Signal processing indicates that the resultant quality grows as the square root of the number of samples, because the coherent (aligned) parts of the signal grow linearly while the non-coherent parts (movement, noise and whatnot) grow as the root of the sum of squares.
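The square-root claim in this thread can be written out in a few lines. This is a sketch under simplifying assumptions of my own, not something stated in the thread: each of the $N$ aligned samples is the same signal $s$ plus zero-mean noise $n_k$ with equal variance $\sigma^2$, and the noise is uncorrelated between samples.

$$
\begin{aligned}
x_k &= s + n_k, \qquad k = 1, \dots, N \\
\sum_{k=1}^{N} x_k &= N s + \sum_{k=1}^{N} n_k \\
\text{coherent part:}\quad & N s \quad \text{(grows linearly with } N\text{)} \\
\text{noise RMS:}\quad & \sqrt{\sum_{k=1}^{N} \sigma^2} = \sigma \sqrt{N} \quad \text{(root of the sum of squares)} \\
\mathrm{SNR}_N &= \frac{N s}{\sigma \sqrt{N}} = \sqrt{N}\,\frac{s}{\sigma}
\end{aligned}
$$

With $N = 16$ samples this gives $\sqrt{16} = 4$, which matches the potential 4X figure quoted for the 1980 work. Misaligned samples break the first line (the signal is no longer the same $s$ in every sample), which is why alignment has to come before the averaging.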
